Have a question?

(+1) 650 566 8833

Many companies use multiple cloud vendors, each offering a variety of fully managed relational, NoSQL, and in-memory databases. Additionally, departmental projects are adopting SaaS applications at an ever-increasing pace, and your organization still needs to maintain on-premises systems that are vital to business operations. These distributed data silos make it difficult to get a complete view of the business and slow digital initiatives. In this new hybrid/multi-cloud environment, organizations need improved cloud data integration. They need to modernize their data architecture to support the changing needs of business.

Without requiring data to be physically copied or replicated, the Denodo Platform logically connects to disparate data sources, creating a centralized data access layer that enables anyone to find, integrate, and share data securely, in real time, regardless of where the data resides. Users can access data when they need it, in the language of the business.

"When data is constantly produced in massive quantities and is always in motion and constantly changing (e.g., IoT platforms and data lakes), attempts to collect all this data are neither practical nor viable. This is driving an increase in demand for connection to data, not just the collection of it (Data Virtualization)."

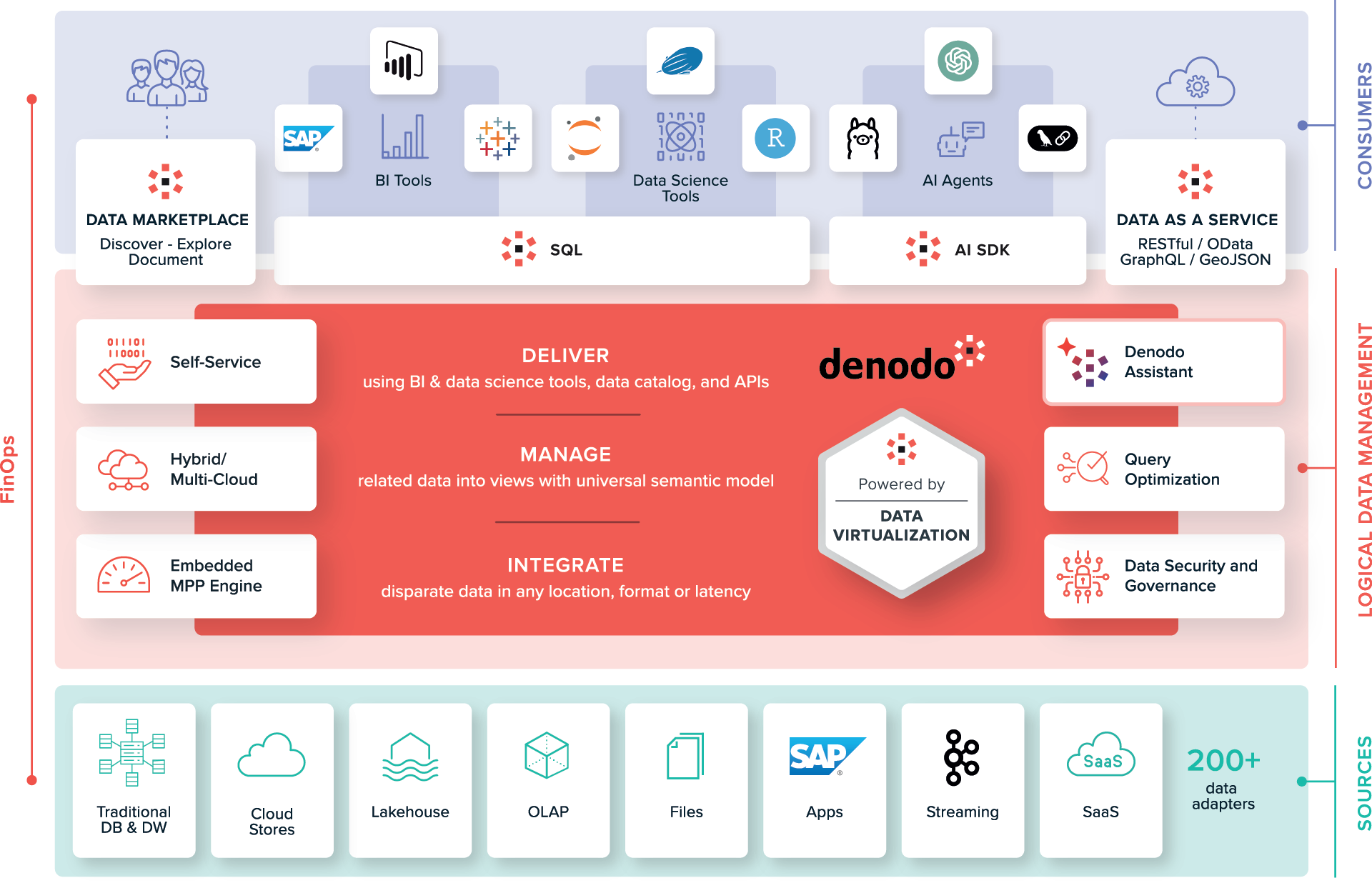

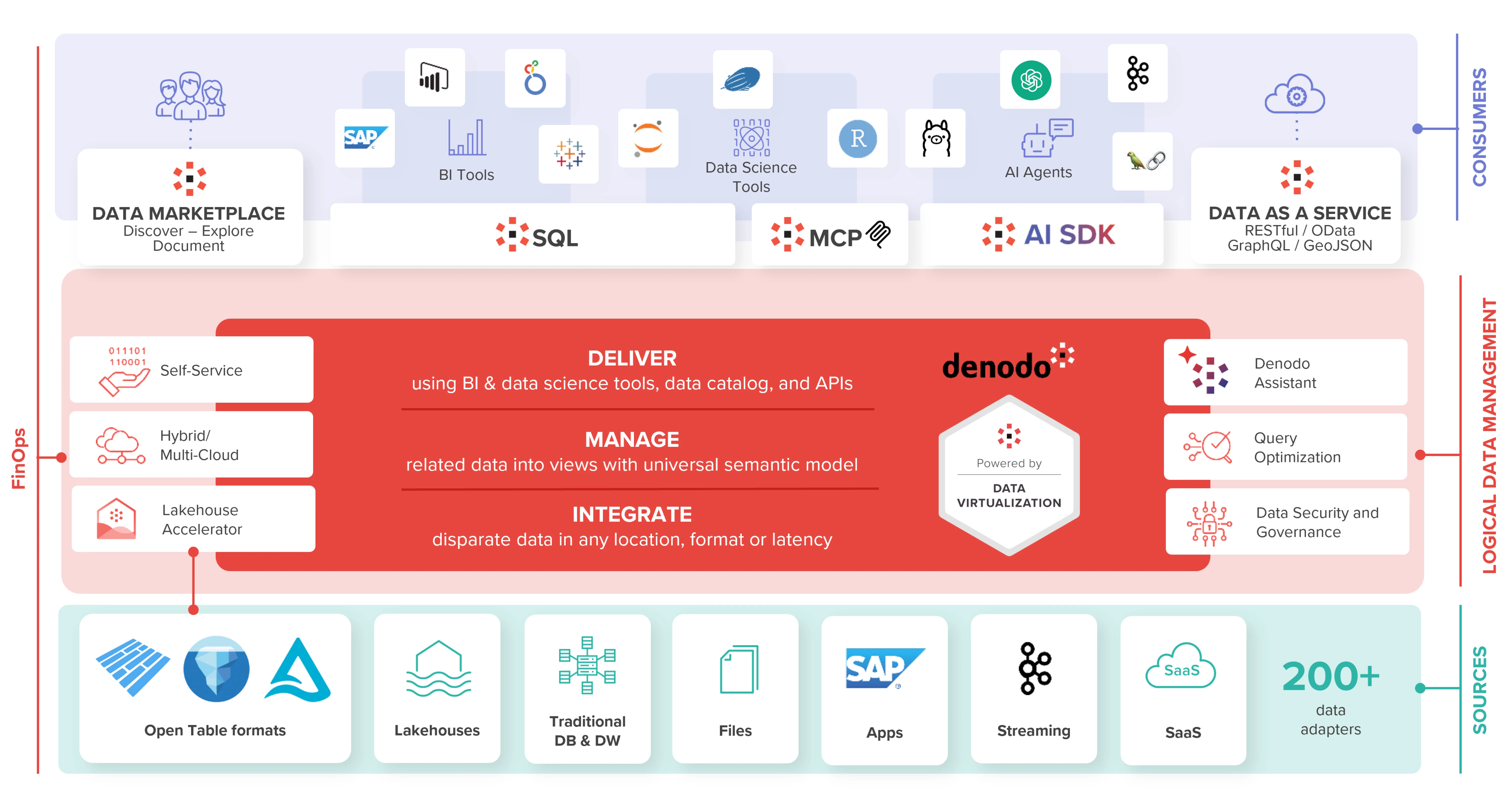

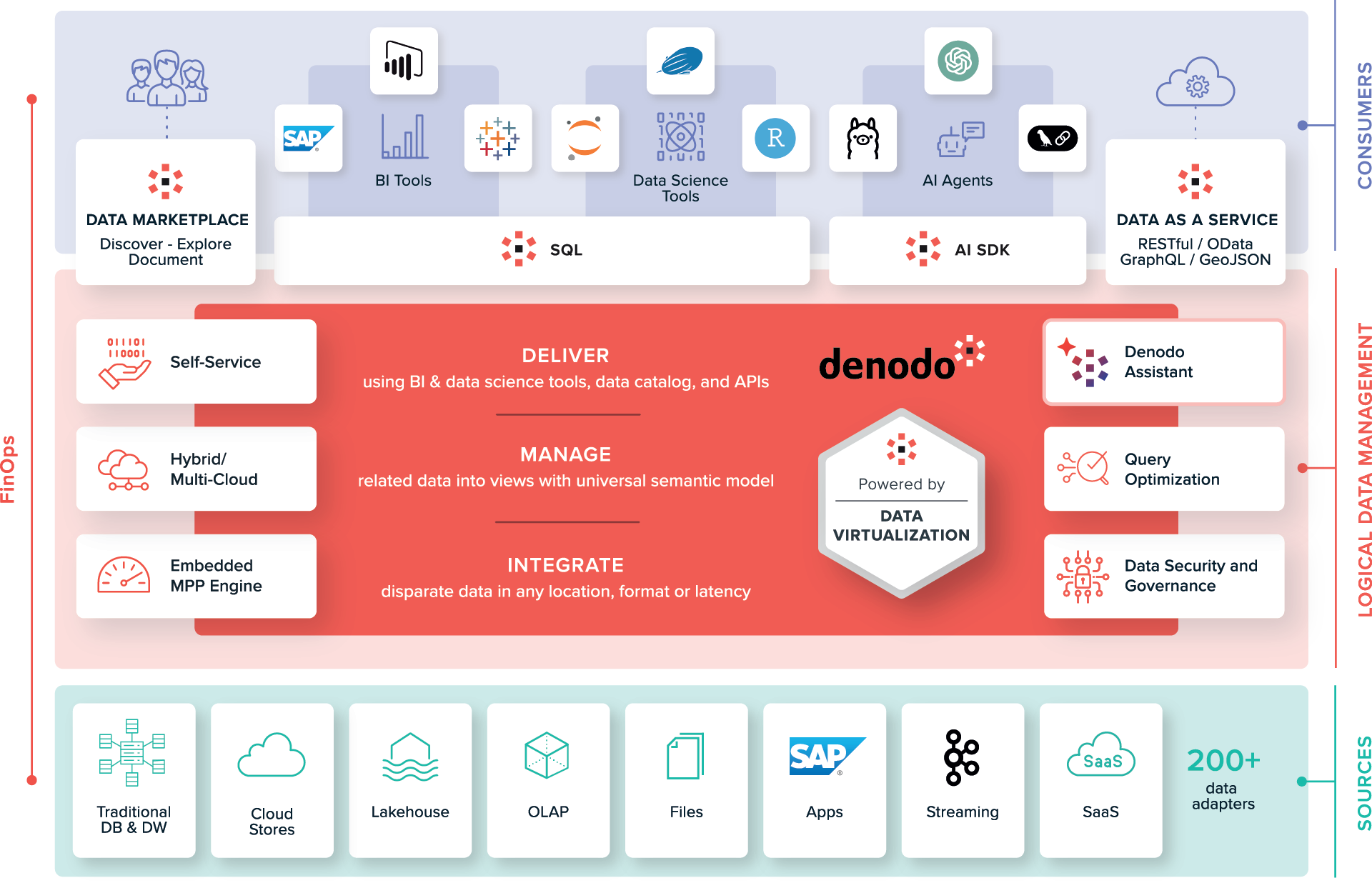

The Denodo Platform is a logical data management, data integration, and data delivery solution that provides real-time access to curated, high-quality data across multiple cloud systems. Powered by data virtualization, it establishes a logical abstraction layer across all cloud data assets that enables immediate integration and access to any dataset without needing to first copy or replicate it. When a user connects to data and requests it, the Denodo Platform retrieves that data from one or more cloud systems in real time, integrates it into business-friendly views, and delivers it to the user. With a broad set of data delivery options, including JDBC, ODBC, ADO.NET, SOAP, RESTful web services, OData, GraphQL, GeoJSON, exports to Microsoft Excel/SQL, Tableau Data Extracts, and JMS message queues, users can access data in their preferred ways.
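The retrieve, integrate, and deliver flow described above can be pictured with a small toy sketch. This is not Denodo code and does not use any Denodo API: it assumes two independent in-memory SQLite databases standing in for separate cloud systems, and the names `crm`, `billing`, and `customer_revenue_view` are hypothetical. It only illustrates the general idea of a logical view that reads its sources at request time instead of copying their data.

```python
import sqlite3


def make_source(ddl, insert_sql, rows):
    """Create an independent in-memory database standing in for one cloud system."""
    conn = sqlite3.connect(":memory:")
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    conn.commit()
    return conn


# Two hypothetical, unrelated sources (names and schemas are made up).
crm = make_source(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)",
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "Acme"), (2, "Globex")],
)
billing = make_source(
    "CREATE TABLE invoices (customer_id INTEGER, amount REAL)",
    "INSERT INTO invoices VALUES (?, ?)",
    [(1, 100.0), (1, 50.0), (2, 75.0)],
)


def customer_revenue_view():
    """Logical view: query each source when called and combine the results.

    Nothing is stored in between calls, so every call reflects the
    current state of both sources.
    """
    # Aggregate in the billing source, then join in memory with CRM rows.
    totals = dict(
        billing.execute(
            "SELECT customer_id, SUM(amount) FROM invoices GROUP BY customer_id"
        ).fetchall()
    )
    customers = crm.execute("SELECT id, name FROM customers ORDER BY id").fetchall()
    return [
        {"customer": name, "revenue": totals.get(cid, 0.0)}
        for cid, name in customers
    ]
```

Calling `customer_revenue_view()` after a new row is inserted into `billing` returns the updated totals, because the view holds no copy of either source. A real data virtualization layer adds much more (query optimization, security, caching, many delivery protocols), none of which this sketch attempts to model.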

The centralized logical data access layer enables anyone to quickly and easily access data regardless of where it resides across multiple cloud systems, without requiring assistance from IT.

The data catalog enables users to browse, discover, and use data assets located across multiple cloud systems. With an AI-powered recommendations engine, tools to aid collaboration, and smart-search features, users know they can trust the data they discover.

The logical data access layer simplifies the data management process for IT and security teams with a centralized point from which to monitor and manage all data access across all cloud systems.

The Denodo Platform offers hybrid/multi-cloud support and over 150 connectors to a wide variety of third-party systems.

With this support, organizations can quickly and easily integrate their entire cloud data landscapes.

Let the Denodo Platform manage your cloud-based PaaS environment, including cluster configuration (TLS, load-balancing, autoscaling, etc.), start/stop controls, automatic installation of updates, and integrated monitoring.

Use an easy-to-deploy procurement model that is available on the marketplace of your organization's preferred cloud services provider. Apply Denodo subscription costs against any cloud-vendor enterprise discount programs you have in place, with options by the hour or by annual or multi-year subscription. The same easy-to-deploy marketplace model also works with licenses you procure from Denodo, through the BYOL (bring your own license) option.

The Denodo Platform integrates with all major cloud service providers, simplifying installation and administration and enhancing connectivity with key data services. It unifies hybrid and multi-cloud environments, streamlining operations across diverse platforms and making cloud data integration easier.

Struggling with fragmented data across multiple clouds and on-premises systems? The Denodo Platform provides real-time access to high-quality data without physical replication. Learn how you can achieve seamless cloud data access, discovery, and centralized control to accelerate your digital initiatives.

Download the Denodo Platform to explore, learn, and build with governed data access.

DOWNLOAD FOR FREE

Experience the full Denodo Platform with Agora, our fully managed cloud service.

START FREE TRIAL